THE HYPOCRITE AND THE PARADOX

On Using the Machine Against Itself

March 10, 2026

Recently, a commenter on my YouTube channel left what he clearly believed was a kill shot. “This is an inquiry,” he wrote, “but like most inquiries, it has its limits.” He presented this as a devastating critique, the coup de grâce. I read it three times. Then I realized he’d accidentally described my entire methodology.

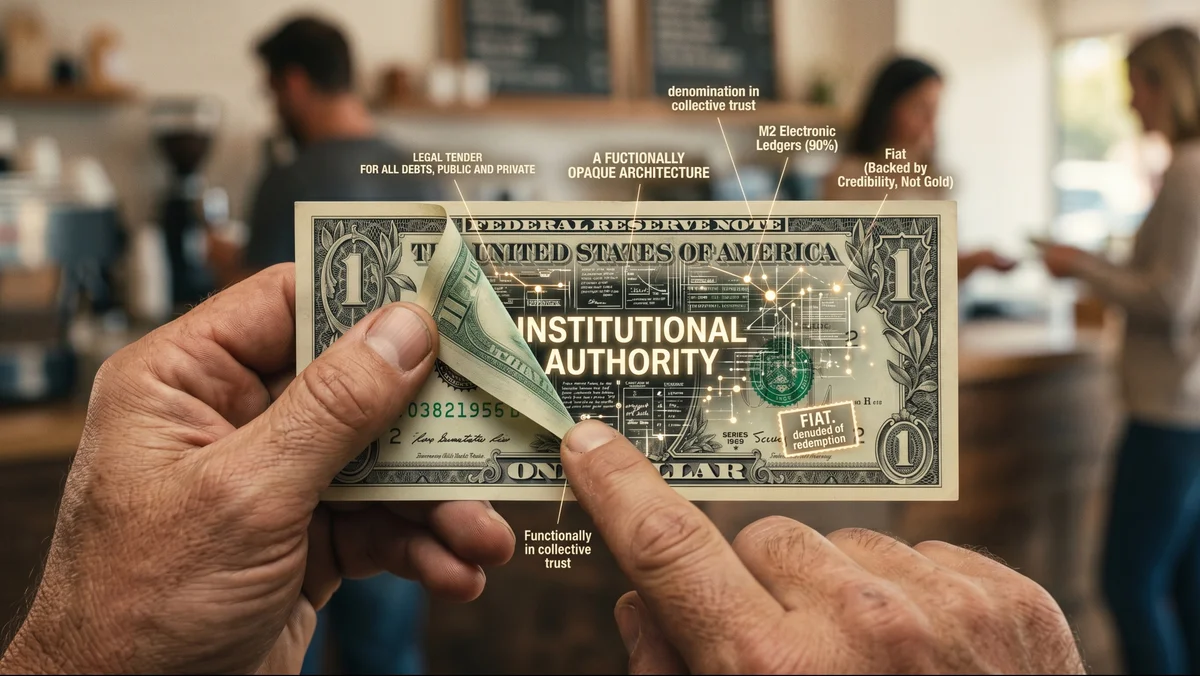

I’ve been making videos that use AI-generated imagery to critique the AI industry. Not the technology—the industry. I design my thumbnails to exploit the YouTube algorithm so that the algorithm itself carries a message about the dangers of algorithmic thinking. I do all of this openly, without apology, and at full throttle.

The accusation, inevitably, is hypocrisy.

It isn’t. But explaining why requires a distinction that the modern media ecosystem has spent twenty years training people not to make.

What I’m Actually Criticizing

Let me be precise, because precision matters here. I am not criticizing large language models. I am not criticizing image generation. I use these tools daily, visibly, and without reservation. What I am criticizing are the practices surrounding these tools—though even “practices” is too generous a word, because a legitimate business practice presumes a relationship to a real market. It presumes you’re building something that actual buyers want to buy.

The hyperscalers are not doing this. They are building hyper-scaled large language models and claiming to be building artificial intelligence—claiming to be on a pathway toward artificial general intelligence. These are different things. Attached to the claim is a cascade of prophecy: the godlike AGI, the transformed economy, the new era. The product is real. The prophecy is marketing.

The executives know this. They must. And yet Dario Amodei issues dire prognostications about a job apocalypse while racing to attract the investment capital that would fund it. Sam Altman will say whatever any given room requires: nonprofit when that sells, capped-profit when that sells, full-profit when that’s what’s left. Ethical guardrails when Congress is watching. Department of Defense contracts when Congress looks away. The name “OpenAI” itself has become Orwellian.

This is hypocrisy. Textbook, structural, verifiable hypocrisy.

What I’m doing is something else entirely.

The Distinction Nobody Wants to Make

A hypocrite says one thing and does the opposite for personal advantage. The contradiction is concealed because exposing it would destroy the con. A paradox holds two apparently contradictory truths in plain sight and asks you to reconcile them. The contradiction is the point.

When Altman positions himself as an ethical steward while monetizing surveillance infrastructure, he needs you not to notice the contradiction. When I use AI imagery to critique the AI industry’s deceptions, I need you to notice. The hypocrisy depends on your blindness. The paradox depends on your sight.

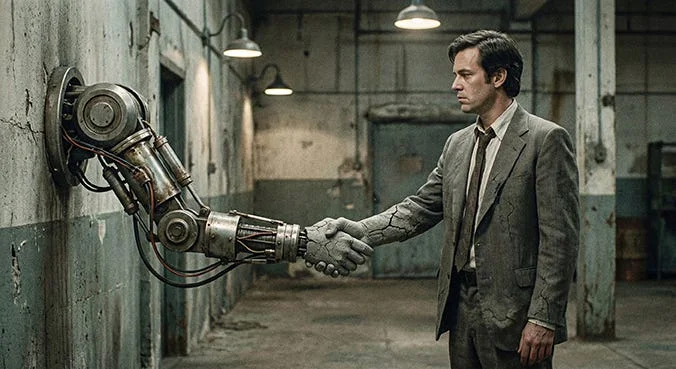

You can understand how someone might confuse the two—from the outside, both involve a person doing a thing while appearing to oppose that thing. But they are categorically different acts. One is a con. The other is a test.

The Art of Hijacking the Spectacle

This move—using a system’s own tools and infrastructure to critique that system—has a name. In the late 1950s, Guy Debord and the Situationist International called it détournement: the act of turning the spectacle’s weapons back on itself. Debord’s The Society of the Spectacle argued that modern capitalism doesn’t just sell you products; it colonizes perception itself. The only way to disrupt a system that controls how you see is to hijack its own visual machinery.

Adam Curtis has spent three decades doing exactly this at the BBC—using the corporation’s own archival footage, its own institutional visual grammar, to critique the power structures the BBC is embedded in. He operates the same paradox, and he has never resolved it, which is precisely the point. The filmmaker who most rigorously anatomizes manufactured reality does so using the tools of the institution that manufactures it.

I didn’t set out to join this lineage. I didn’t know I was performing détournement until I looked at my comment section and realized I’d hit a nerve. But now that the nerve is exposed, it’s worth understanding what it’s connected to.

The Muscle We’ve Lost

The mass media and communication ecosystem—and I include the churning maw of “social” media here—no longer informs. It conditions. It imposes a steady diet of stark binaries on the collective consciousness, forcing reality into a series of algorithmic cages. Right vs. Left. AI savior vs. AI demon. Hypocrite vs. Saint. You are either For or Against. You must deem a thing Good or Bad.

Examine the physical world, the tangled affairs of actual human life. Is there a single facet of it that can be honestly reduced to such a zero-sum equation?

The Korean-German philosopher Byung-Chul Han has a useful term for what’s happening: he calls it domination through excess. The digital platforms don’t suppress thought through censorship—that would be too obvious, too resistible. They suppress it through flooding. They saturate the cognitive landscape until discernment itself becomes impossible. Neil Postman warned in Amusing Ourselves to Death that Huxley’s dystopia would prove more prescient than Orwell’s—not a boot on the face, but a population too entertained to think. Han’s insight is the digital-age update: not too entertained, but too overwhelmed.

It feels less like journalism and more like a mass-conditioning psy-op, a funneling of perception into Procrustean molds. I’m not suggesting a conspiratorial hand pulling the levers; this is largely self-inflicted, a collective abdication of critical thought. But after decades of this algorithmic force-feeding, one has to wonder: have we lost the very muscle required to hold a contradiction, wrestle with nuance, or find our footing in the vast, fertile grays?

The Objection That Actually Matters

The strongest case against what I’m doing isn’t the hypocrisy charge. I’ve dispatched that. The strongest case comes from Audre Lorde: “The master’s tools will never dismantle the master’s house.”

The argument is structural, not moral. Every view my critique generates makes YouTube’s ad-targeting engine marginally more powerful. Every click validates the attention-extraction model I’m criticizing. The algorithm doesn’t care about the content of my message; it only registers the engagement. Debord himself, late in life, concluded that détournement might be insufficient—that the spectacle could absorb and recuperate any critique delivered through its own channels, metabolize dissent into content, and emerge stronger for having digested it.

I take this seriously. And I don’t have a clean answer. What I have is a practitioner’s observation: after thirty-five years of manufacturing perception for Fortune 100 companies, I can tell you that the master’s tools are the only tools that exist. There is no outside-the-system from which to launch a pure critique. Every medium, whether print, broadcast, digital, or social, is someone’s infrastructure. The question has never been whether to use compromised tools. The question is whether you use them consciously or whether they use you.

Lorde, it should be noted, published her argument in a book—produced by a publishing industry she had deep criticisms of, distributed through commercial channels she recognized as structurally exclusionary. She understood the paradox. She operated within it. She did the work anyway.

The Inquiry as Position

This brings me back to my commenter and his accidental compliment. Yes: what I’m doing is an inquiry. An inquiry, by definition, asks questions. It explores a line of thinking. It presents possible answers—even extremely likely contenders—without claiming finality. It is not a settled case.

The channel’s editorial position is epistemic humility. Not the false humility of “I don’t know what I’m talking about.” I do know what I’m talking about. Thirty-five years in the business of manufacturing perception gives a person a particular kind of authority—not the authority of the academic who studies propaganda from the archive, but the authority of the practitioner who built the campaigns, lit the sets, designed the images that moved product and shaped opinion at scale. That’s domain authority. It’s real. And it’s genuinely useful.

But domain authority isn’t omniscience. I can present my current best understanding of what’s happening—arrived at rigorously, sourced carefully, checked against the sharpest minds I can find in adjacent domains—and simultaneously hold the door open for revision. This is what actual intelligence does. It holds the paradox: confidence and uncertainty, assurance and openness, the clear picture and the acknowledgment that the picture isn’t final.

Every binary thinker in my comment section who mistakes this for weakness is demonstrating exactly the cognitive atrophy I’m describing.

The Test

So here is what I’ve inadvertently built: a channel that, on its face, critiques the AI industry, but underneath that, surfaces a paradox that tests whether the viewer can hold two things at once. A critique delivered through the very medium it critiques. A practitioner of manufactured perception teaching people to see through manufactured perception. An inquiry that presents rigorous conclusions while refusing to call them final.

I didn’t design this. I discovered it in the reactions: in the comments that accused me of hypocrisy, and in the confusion of viewers who couldn’t categorize the channel as for or against AI, because it is clearly, stubbornly, both. The channel doesn’t resolve the paradox. It presents the paradox and creates the conditions for viewers to engage it, to exercise the very muscle that two decades of binary media conditioning have left to atrophy.

Whether they take that opportunity is an empirical question, not a foregone conclusion. Epistemic humility demands I say so.

But the opportunity is there. And the nerve it’s hitting tells me something is alive under the surface that the algorithm hasn’t killed yet.