THE OBELISK IN THE ROOM

On tools, paradox, and what it means to fight the machine from inside the machine.

March 3, 2026 by Julian Whatley | The Architecture of Perception

In the spring of 1994, a cinematographer named Vilmos Zsigmond sat down for an interview with American Cinematographer magazine and said something that I’ve never been able to shake. Zsigmond was one of the giants — he’d shot McCabe & Mrs. Miller, The Deer Hunter, Close Encounters of the Third Kind. He’d spent his entire career coaxing light and shadow into meaning, building the visual grammar that helped define what American movies looked and felt like for a generation. And what he said, in that interview, was surprisingly simple: “The camera is just a box. What matters is what you put in front of it.”

I was in my early thirties when I read that. I’d been working in the camera department myself for about a decade at that point — Hollywood blockbusters (including Maverick with Vilmos), commercials, music videos — and I thought I understood what he meant. I thought he was making a point about the primacy of vision over technology. That the machine doesn’t matter; the artist does.

I was right. But I was also missing about half of what he was saying.

What I understand now, thirty years later, is that Zsigmond was making a deeper argument about the relationship between tools and intelligence. He wasn’t saying the camera doesn’t matter. He was saying that the camera is an extension of thought — that it has no meaning independent of the mind directing it. The camera doesn’t see. You see, and then you use the camera to show other people what you saw. The tool executes the vision. The vision doesn’t come from the tool.

I’ve been thinking about Zsigmond a lot this week. Because something has happened that’s forced me to think very carefully about tools, and vision, and what it means to use the instruments of a system you’re also trying to critique.

WHY DOES ANY OF THIS MATTER?

Seven days ago, I had about 250 subscribers on my YouTube channel and a best-performing video with roughly 1,800 views. These were not numbers that suggested I was about to become anyone’s primary source of information about anything. I was making content I believed in, building toward something I couldn’t yet fully see, operating on the faith that if the work was good enough, the right people would find it eventually.

Then a video I made about the physics of the AI bubble — the structural mechanics of why the whole thing is likely to collapse under its own weight — crossed 150,000 views in about 75 hours. My subscriber count crossed 13,000. The comments section filled with hundreds of messages from people who felt, many of them for the first time, that someone was saying clearly what they’d been sensing but couldn’t articulate.

I’m going to be honest with you: this has been overwhelming. Not in a way I’m complaining about. But in a way that demands something of me — a kind of accountability to the community that suddenly materialized around this work. Because a community isn’t just an audience. It has its own intelligence, its own expectations, and its own legitimate demands on your integrity.

And a significant portion of that community had a question. A sharp one. A fair one.

They wanted to know why I was using AI-generated images in videos that are, in substantial part, critical of the AI industry.

Before I answer that — and I’m going to answer it properly, not defensively — I need to tell you something about how perception actually gets manufactured.

WHO AM I TO BE SAYING ANY OF THIS?

I spent almost forty years inside the machinery that builds it. Not studying it from the outside, not theorizing about it from a university department, but doing the work: building visual campaigns for Fortune 100 companies, shooting award-winning music videos for platinum recording artists, working on films that played at Sundance, one of which even won the Grand Jury Prize. The specific clients and awards aren’t the point. The point is that when you spend that long helping organizations construct the images of themselves that they want the world to believe, you develop a very particular kind of literacy. You learn to read the gap between what something is and what it’s been made to appear to be.

That gap, it turns out, is where almost everything interesting lives.

And one of the things you learn, working in that gap for four decades, is that the single most powerful tool in the perception engineer’s arsenal isn’t a camera or a lighting rig or a particular piece of editing software. It’s a concept. Specifically, it’s the concept of the tool itself — because the moment you understand that everything in your working life is a tool, including the ideas, including the images, including the narratives you’re constructing, you stop being mystified by any of it.

Oil paint is a tool. A motion picture camera is a tool. The RED digital motion picture camera, when it arrived, was a tool that older cinematographers regarded with the same suspicion now being directed at AI image generators, for roughly the same reasons. Every generation of visual communicators has had its version of this argument. And every generation, without exception, has eventually arrived at the same conclusion: the tool is not the artist. The tool executes the vision. The vision comes from somewhere else.

ISN'T USING AI TO CRITIQUE AI JUST HYPOCRISY?

So when people in the comments point out — correctly — that I’m using “AI” (specifically a large language model called Nano Banana) to generate the images that accompany my critiques of the AI industry, my first instinct is not defensiveness. My first instinct is to explain what’s actually happening, because I think the confusion is revealing in ways that go beyond the immediate question.

Here’s the thing about those images. I’m not pressing a button and accepting whatever a machine decides to show me. I’m writing detailed, specific, conceptually precise prompts describing the exact visual argument I need a particular image to make. The concepts in those prompts are entirely my own — extensions of the ideas I’m developing in each video, made visible through a tool that’s capable of rendering them with something close to the precision I’d bring to a traditional production.

This distinction matters enormously. Because what’s generating those images isn’t “artificial intelligence” in any meaningful sense of that phrase — and the phrase itself is a piece of manufactured perception worth examining. It’s a large language model responding to instructions. The model doesn’t have ideas. I have ideas. The model executes them. And that’s a relationship I understand very well, because it’s structurally identical to the relationship between a cinematographer and a camera — or, for that matter, between a director and a cinematographer.

Now, here’s the practical reality that I don’t think is obvious unless you’ve spent serious time doing this kind of work: a talking head is a severely limited vehicle for conveying dense, abstract ideas. The human brain processes language and imagery through different cognitive channels, at different speeds, with fundamentally different retention characteristics. When I’m trying to explain the thermodynamics of a capital bubble, or the mechanics of how manufactured consensus spreads through a population, I need images that are doing precise conceptual work. Not decoration. Not illustration. Argument.

Stock photography can’t do this. There’s no image in any library on earth that corresponds to the specific informational need of a specific argument I’m making on a specific day. The images I generate through carefully constructed prompts are the closest available approximation to what I’d produce if I were directing a full production specifically designed to visualize these ideas. They’re not perfect. But they’re doing the job.

So yes. I’m using AI to critique AI. And no — that’s not a contradiction. Let me tell you why.

IF THE SYSTEM IS THE PROBLEM, HOW DO YOU FIGHT IT WITHOUT BECOMING PART OF IT?

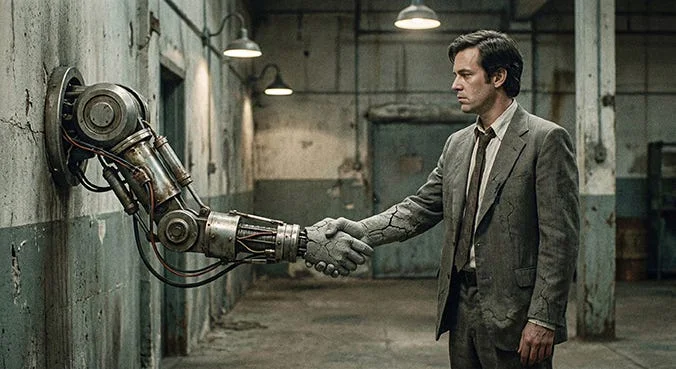

In 1991, James Cameron released a film called Terminator 2: Judgment Day. It’s the one where John Connor, the future leader of the human resistance against a machine uprising, has managed to do something remarkable: he’s captured a T-800 — a Terminator, a machine built specifically to destroy human beings — and reprogrammed it to protect humanity instead. He’s taken Skynet’s own weapon and turned it against Skynet.

I’ve been thinking about that film a lot lately, for reasons that have nothing to do with robots.

We’re living inside what I can only describe as an informational blizzard. A near-total saturation of the cognitive environment with disinformation, manufactured urgency, and narrative that’s been engineered not to inform but to capture attention through fear. The architecture of that system is sophisticated, extremely well-funded, and moving at a velocity that no individual human being operating with purely analog tools can match. The platforms, the algorithms, the content factories — they’re all optimized for the same thing: maximum engagement, achieved most efficiently through maximum anxiety.

To produce content of sufficient quality, at sufficient velocity, to actually cut through that noise and reach people who are trying to orient themselves — that requires using tools that are capable of matching the system’s speed. I’m using the weapon of the machine to fight the machine. I’m doing it consciously, with complete awareness of the irony involved, and I’m doing it because the alternative isn’t some kind of principled purity. The alternative is irrelevance.

John Connor didn’t refuse to use the T-800 because Skynet built it. He used it because it was the right tool for the mission.

But here’s what I’ve been sitting with, and what I think is actually the more interesting question underneath all of this.

WHY ARE PEOPLE QUESTIONING WHETHER I'M EVEN REAL?

The criticism of my image choices — the “you’re using AI to critique AI” observation — appears in the comments alongside something else. Something I didn’t expect, and that I find genuinely revealing.

A surprising number of people have speculated — seriously, not rhetorically — about whether I myself am AI-generated. Whether Julian Whatley is a real person, or a constructed persona, or some kind of synthetic media artifact deployed to simulate the kind of critical analysis that real humans produce.

I want to be clear: I’m not AI-generated. I’m a flesh-and-blood human being with a verifiable professional history, a body of work that exists in the physical world, and — trust me on this — far too many intractable opinions to have been assembled by a language model. I take no offense at the question. But I think the fact that the question is being asked, sincerely, by a meaningful number of people, tells us something important about where we are.

WHAT DOES A STANLEY KUBRICK FILM HAVE TO DO WITH ANY OF THIS?

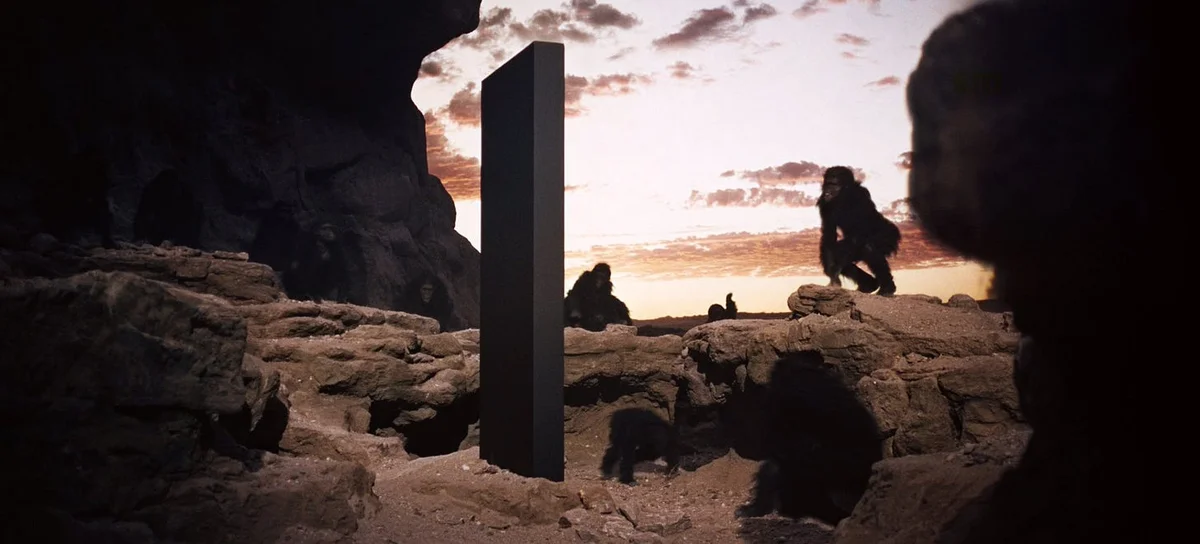

In the famous opening sequence of Stanley Kubrick’s 2001: A Space Odyssey, a group of early humans encounter a perfectly black monolith. It’s appeared in their landscape without explanation — no context, no precedent, no category in their experience that could contain it. And their response is awe braided with terror. They approach it tentatively. They touch it. They recoil. They circle it. They do not know what to do with something that doesn’t fit.

The obelisk appears again later in the film, excavated on the moon by a civilization vastly more sophisticated than those early humans. And the response is, structurally, identical. More measured in its expression, more articulate in its language. But underneath: the same disorientation. The same encounter with something that exceeds the available frameworks for understanding.

I think that’s where we are with large language models. They’ve arrived on this planet — and I use “arrived” deliberately, because there’s something almost extraterrestrial about the speed and scale of their appearance — with capabilities that our existing cognitive and cultural frameworks aren’t equipped to process. And the fear response they’re generating isn’t irrational. It’s the entirely appropriate neurological response to an encounter with something genuinely unprecedented.

What I find significant is this: the willingness to ask whether a person you’re watching on a screen might be artificially generated isn’t paranoia. It’s a rational adaptation to an information environment that has been deliberately destabilized. We’ve reached a point where people are genuinely uncertain about the ontological status of the things they’re encountering. Is this image real? Is this argument real, or is it a sophisticated simulation of an argument? Is this person real?

These are questions that, five years ago, simply didn’t need to be asked. That they now do — and that asking them is reasonable rather than ridiculous — is itself a measure of how profoundly the perceptual landscape has shifted.

This is precisely the territory that this channel exists to navigate. Not by providing a map, because no reliable map exists yet. But by developing the perceptual tools to read the landscape for yourself.

SO WHAT IS IT THAT MACHINES CAN'T DO, THAT WE CAN?

Which brings me to what I think is the deepest point in all of this. The one I want to leave you with.

There’s a capacity that human beings have — one that I’d argue is among our most sophisticated cognitive achievements — that no large language model possesses: the capacity to hold paradox.

What I mean is this: to be aware of two ideas that don’t clearly align with each other, that appear to be in genuine tension or even outright contradiction, and to resist the urge to resolve that tension prematurely. To let the paradox breathe. To stay inside the discomfort. To wait, with patience and discipline, until something deeper and more complex and more true begins to emerge from the friction between them.

A language model, when confronted with a contradiction, resolves it. That’s what it’s built to do — to find the most coherent, most statistically probable reconciliation of competing inputs. It can’t tolerate genuine paradox, because genuine paradox isn’t a problem to be solved. It’s a condition to be inhabited.

I’m using a tool I’m critiquing. I’m inside the system I’m analyzing. I’m, in some meaningful sense, part of the very information environment I’m trying to help people see through. These are real tensions. I’m not going to pretend they aren’t. But I’m also not going to pretend that the existence of those tensions invalidates the work, because that would be intellectually flaccid: the premature collapse of a productive paradox into a comfortable binary.

Here’s the thing about paradox: it isn’t a sign that you’re confused. It’s usually a sign that you’re getting close to something true. The most interesting territory in any domain is almost always found in the space where two apparently incompatible things are both, somehow, correct. The capacity to stand in that space without flinching — to remain oriented while sitting inside contradiction — that’s not a weakness of human cognition. It’s one of its most sophisticated features.

And it’s the one that I don’t think any machine, however impressive its outputs, has yet come close to replicating.

SO WHAT WAS ZSIGMOND REALLY TELLING US?

I want to go back to Vilmos Zsigmond for a moment.

“The camera is just a box. What matters is what you put in front of it.”

He was right. And he was also, I now understand, making a subtler point than I initially recognized. Because the thing you put in front of the camera isn’t just a subject. It’s a set of ideas about what’s worth seeing, what’s worth showing, what the world looks like when you’re paying the right kind of attention to it.

The tool matters. The tool always matters. But the tool is always in service of something else — a vision, a question, an argument about what’s real and what’s been constructed to look real. That’s true of a Panavision camera from 1975. It’s true of a large language model in 2026. The technology changes. The relationship between the tool and the intelligence directing it stays the same.

I’m not a guru. I’m not an authority. I’m a fellow traveler who spent 35 years building the kinds of illusions I’m now trying to help you see through — and who’s willing to use whatever tools are available to do that work with the speed and precision this moment demands.

Even the ones that make the argument look, from the outside, like a contradiction.

Especially those.

I’m Julian Whatley. This is The Architecture of Perception. And now you see it.